Payam Parvizi

I am a PhD-trained Research Associate with 4+ years of experience developing and validating reinforcement learning methods for continuous control under real-world-motivated constraints. I am driven by a deep curiosity about how learning-based controllers can achieve the smooth, stable, and reliable behavior needed for safe deployment in physical systems.

I specialize in action/policy regularization and policy smoothing to reduce high-frequency oscillations and improve reliability, validated through the development of custom Gymnasium-compatible environments and simulation-to-real transfer. My work includes deployment on quadcopter platforms and applications in satellite-to-ground optical communications, conducted at the University of Ottawa under the supervision of Dr. Davide Spinello and Dr. Colin Bellinger, and in collaboration with Dr. Ross Cheriton at the National Research Council of Canada (NRC).

CV

Google Scholar

GitHub

LinkedIn

Email

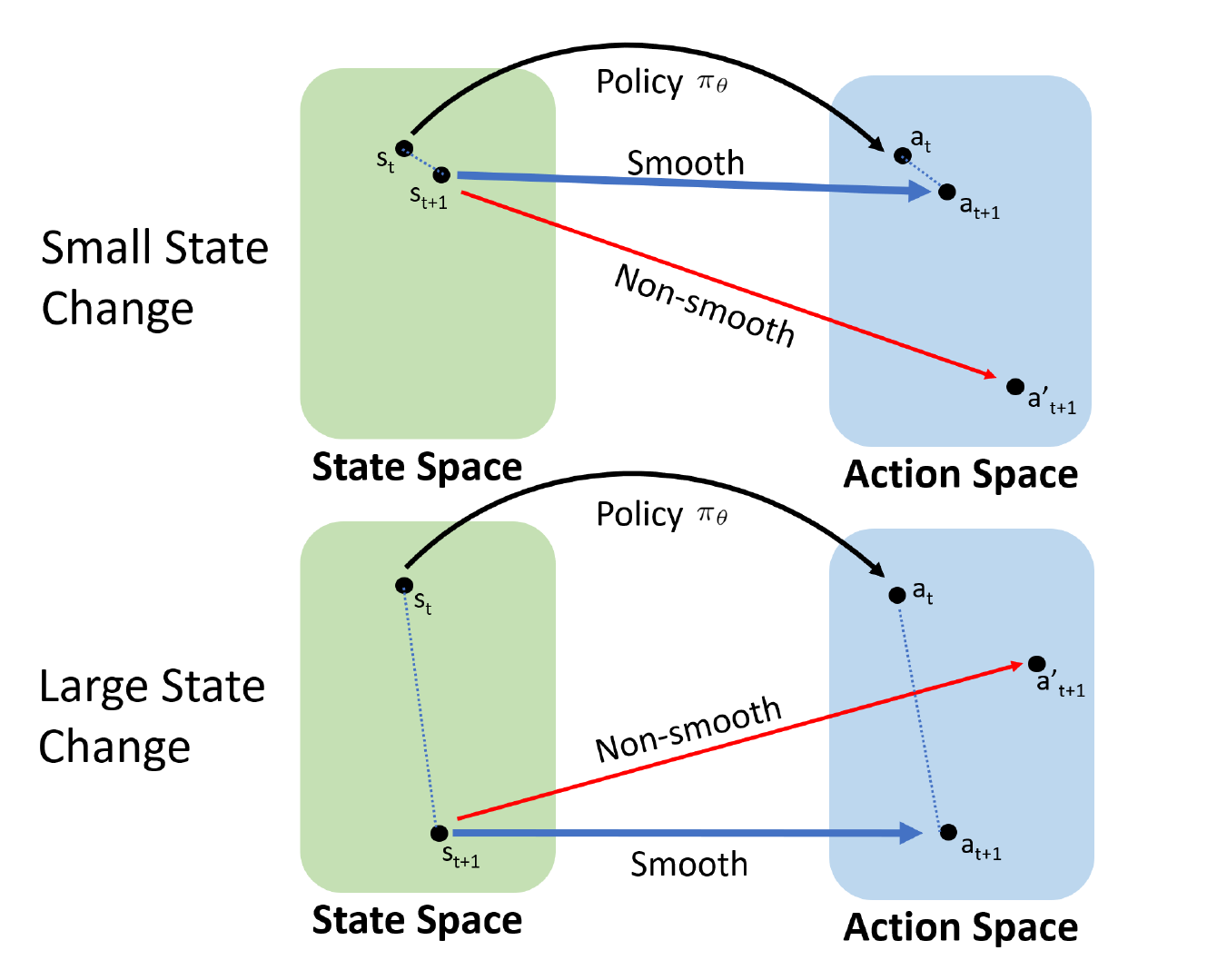

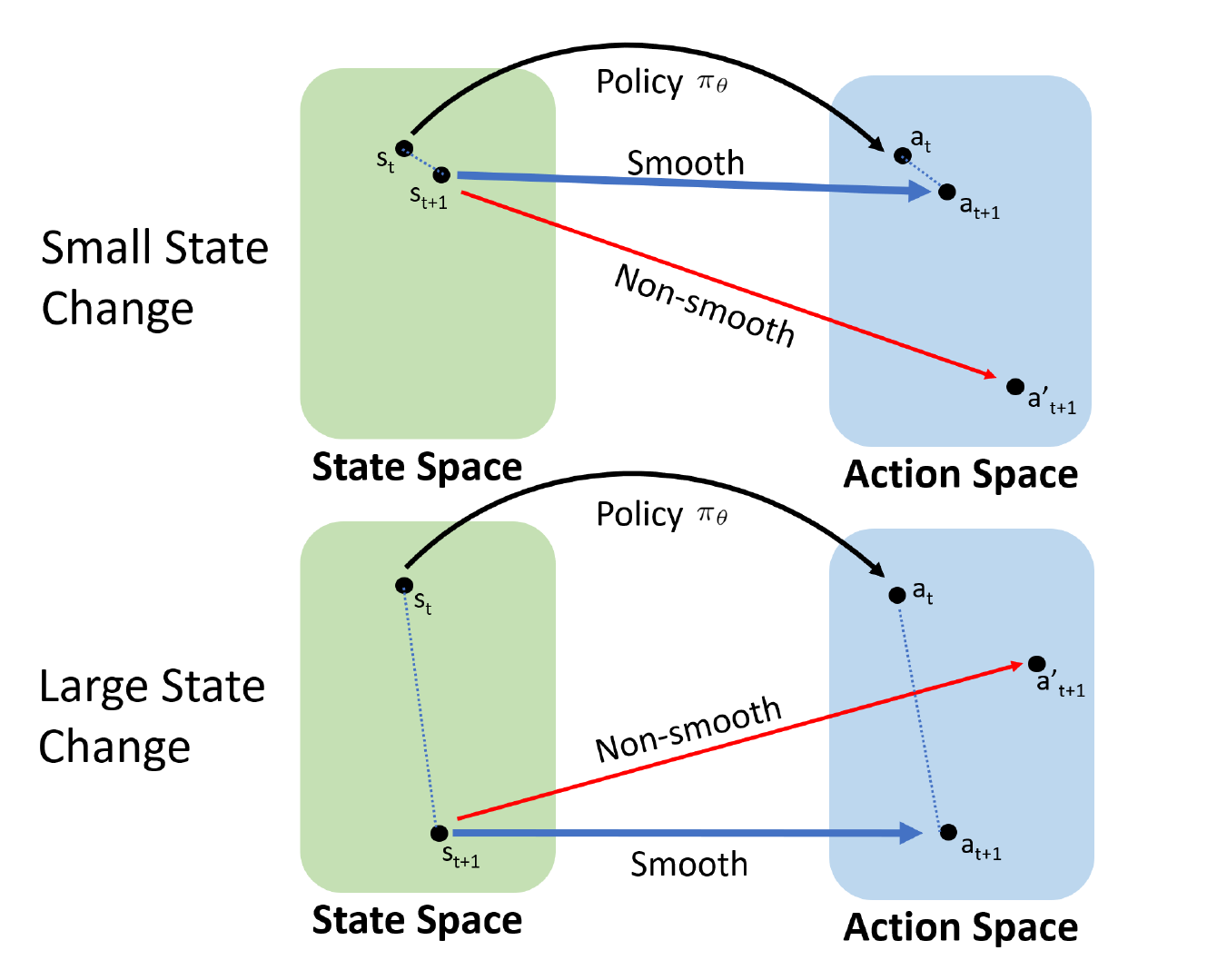

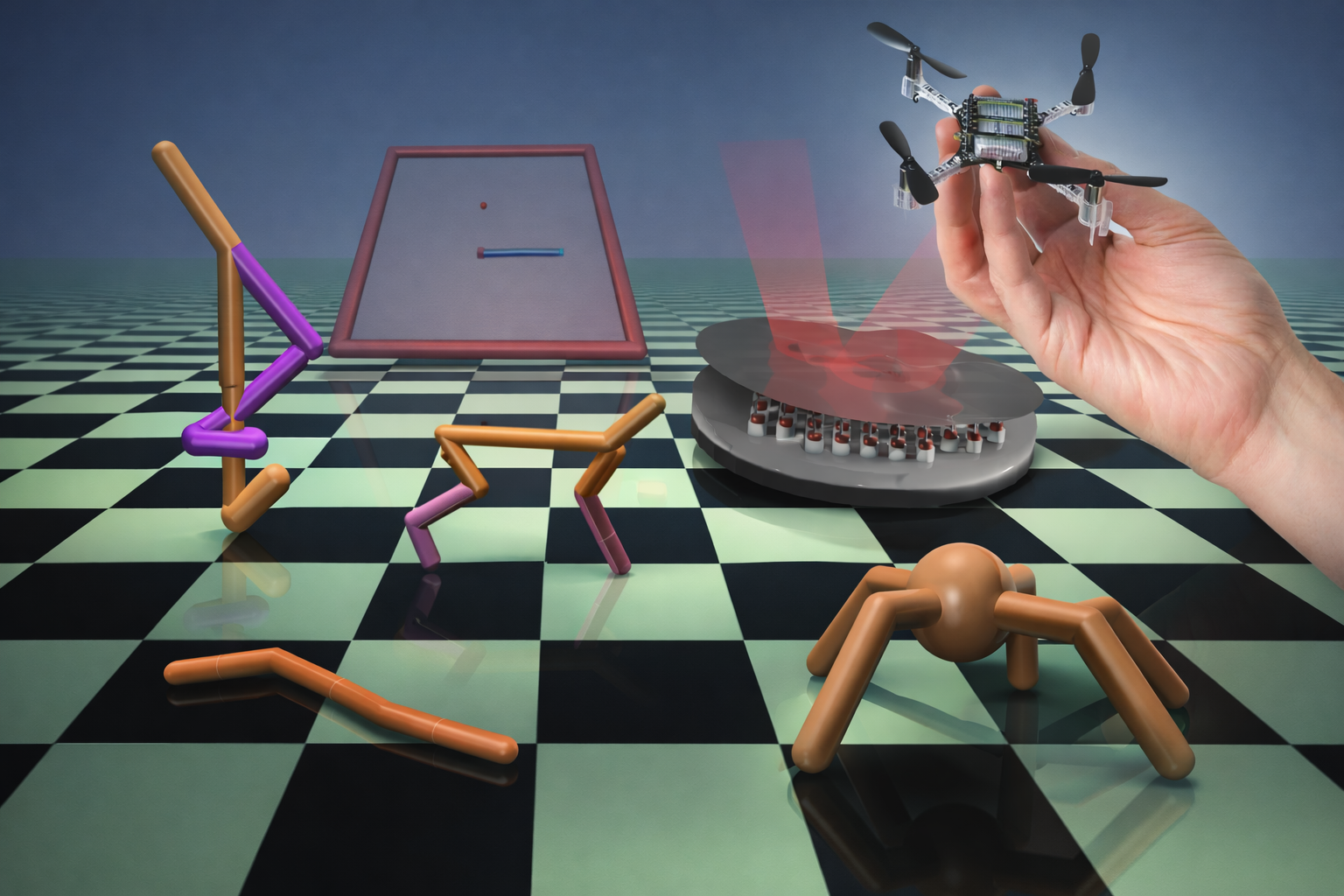

Adaptive Policy Regularization for Smooth Control in Reinforcement Learning

This work introduces State-Adaptive Proportional Policy Smoothing (SAPPS), a lightweight policy regularization method that suppresses high-frequency action oscillations in continuous-control reinforcement learning while preserving responsiveness to rapid state changes.

The method is validated across MuJoCo benchmarks, an adaptive optics system, and sim-to-real quadcopter experiments.

PhD research conducted at the University of Ottawa in collaboration with the National Research Council of Canada (NRC).

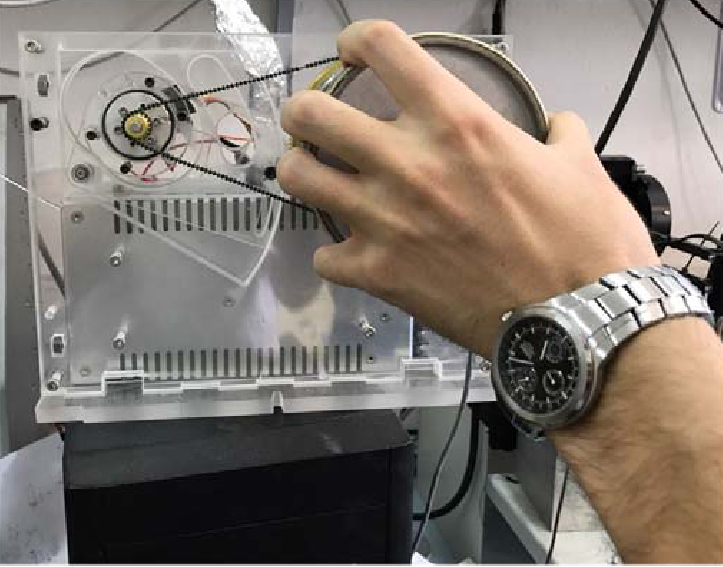

Sim-to-Real Reinforcement Learning for Quadrotor Control

This work develops a sim-to-real reinforcement learning framework for quadrotor control using the Proximal Policy Optimization (PPO) algorithm. Training is designed to support both simulation and real-world deployment on the Crazyflie 2.1 nano-quadrotor, enabling stable hovering control.

[Code]

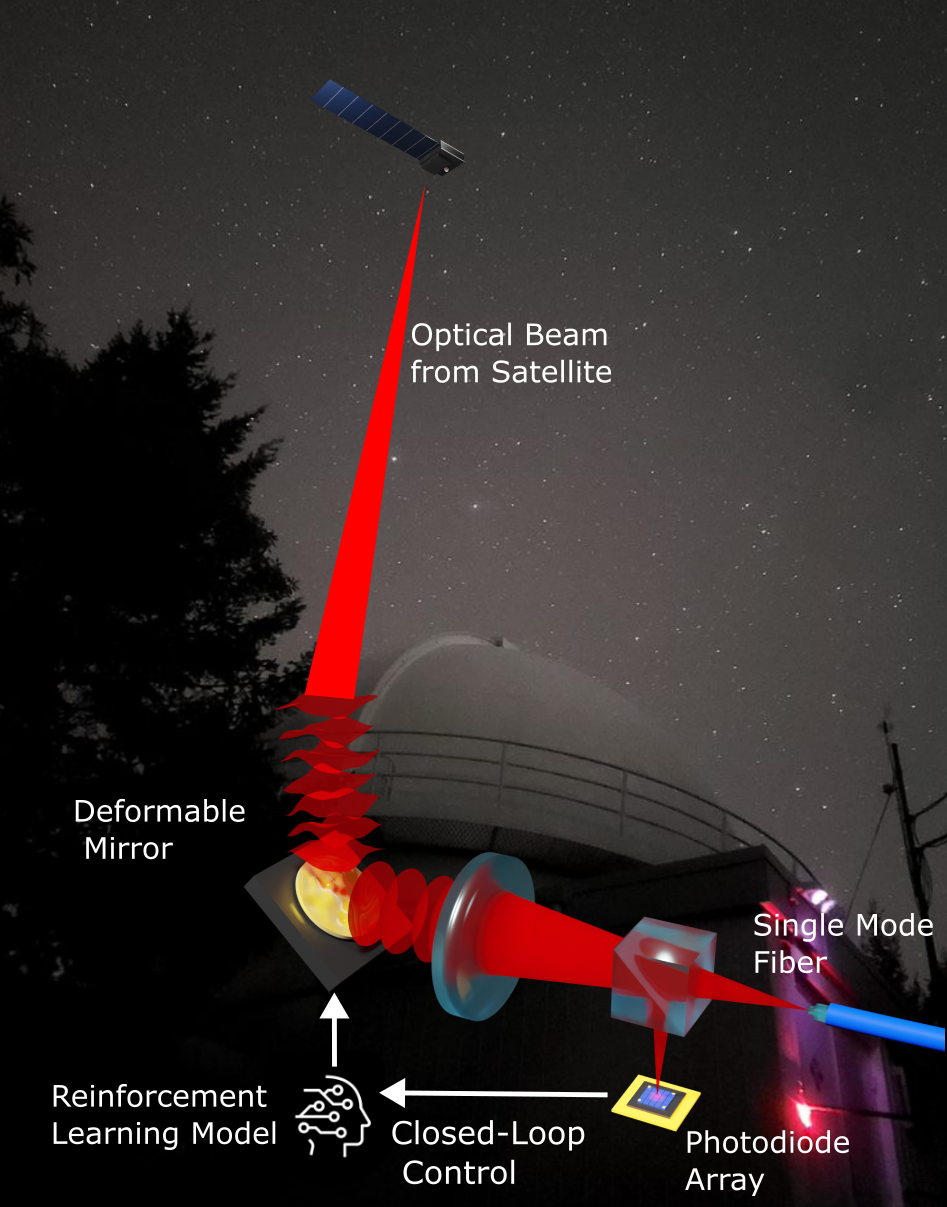

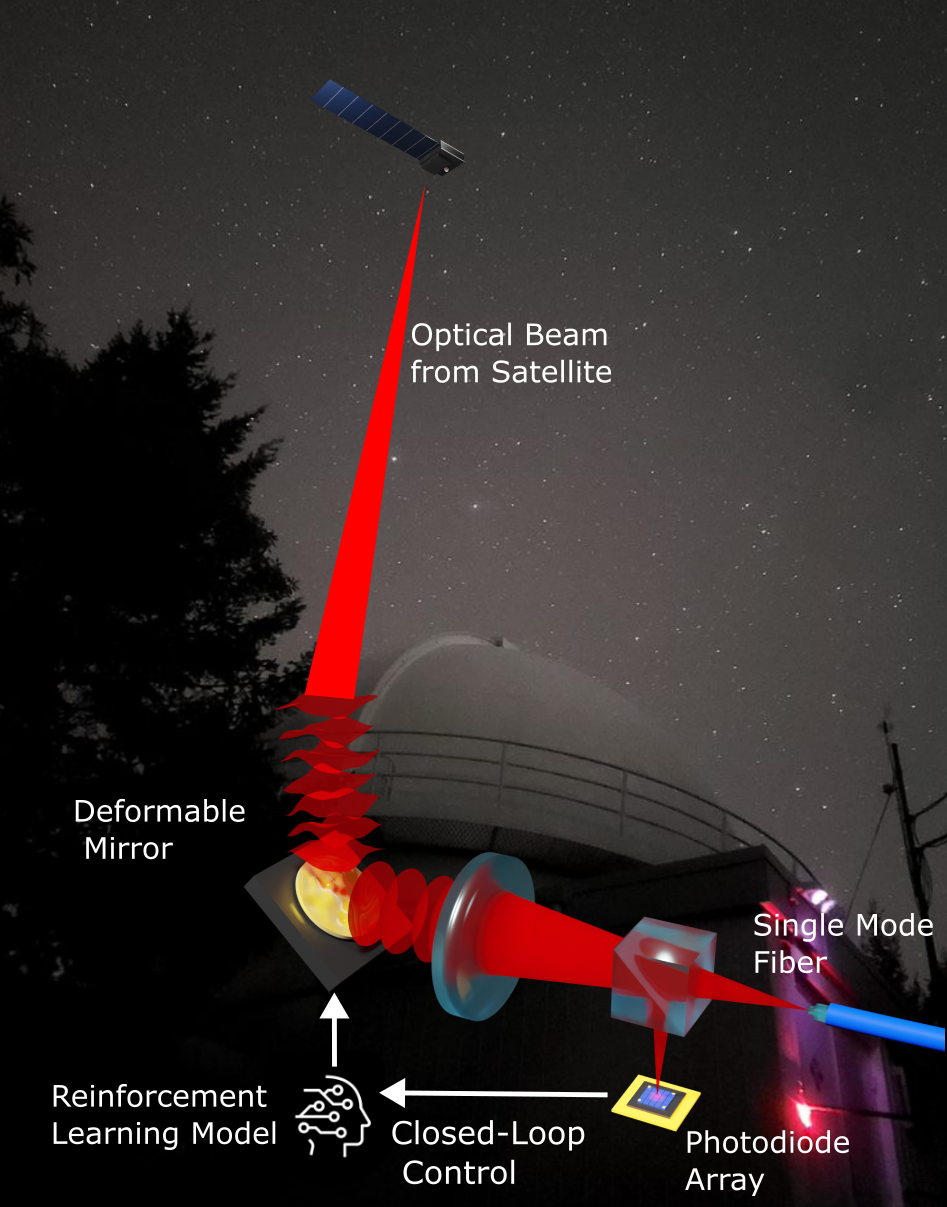

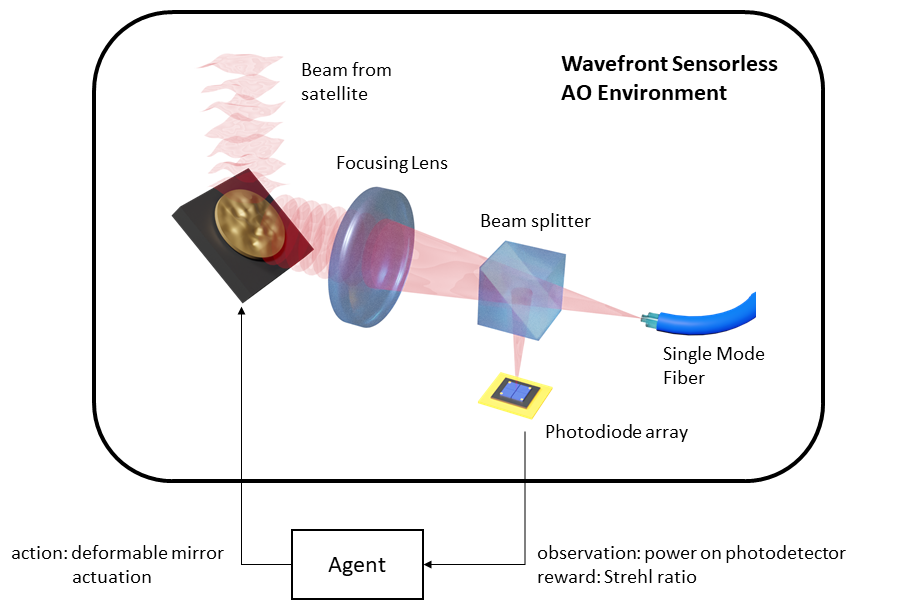

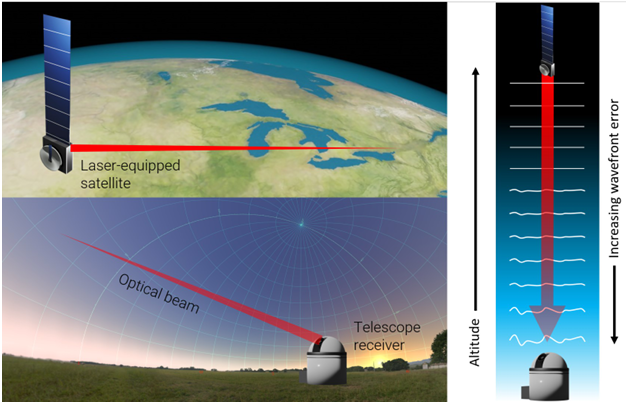

Reinforcement Learning for Wavefront Sensorless Adaptive Optics

This work develops a simulated wavefront sensorless adaptive optics (AO) reinforcement learning (RL) environment for training and evaluating RL algorithms. It is the first AO–RL environment implemented using the Gymnasium framework (the maintained successor to OpenAI Gym), enabling reproducible benchmarking and analysis. The work also demonstrates, for the first time, the potential of reinforcement learning for wavefront sensorless AO in satellite communication downlinks.

PhD research conducted in collaboration with the National Research Council of Canada (NRC).

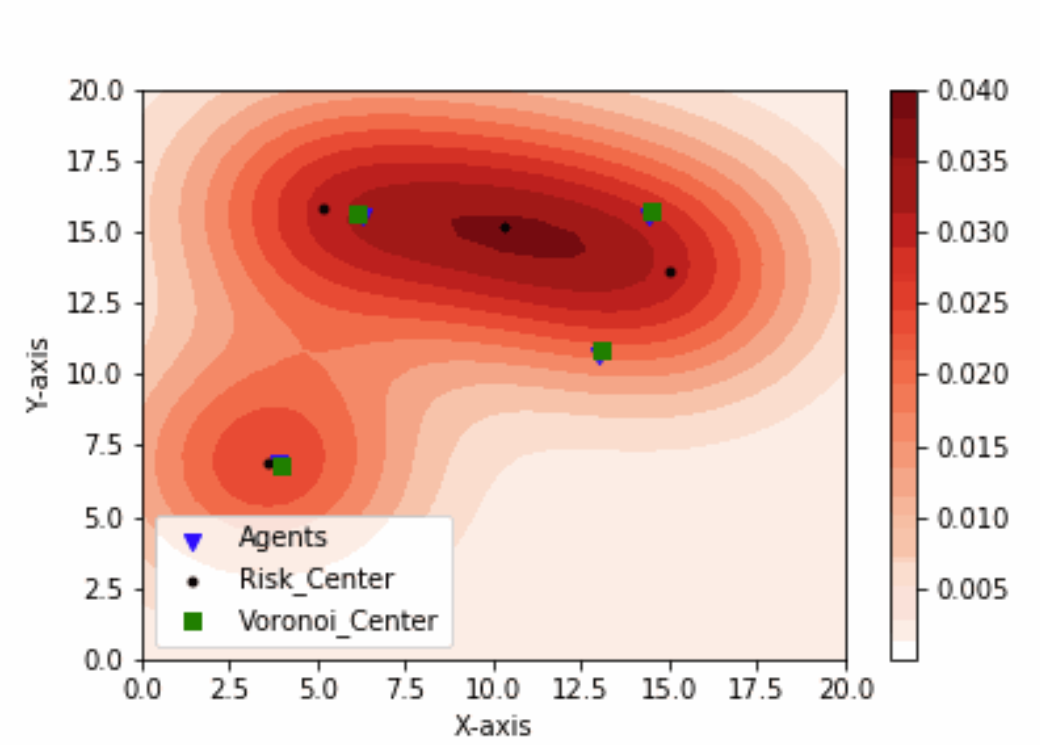

Multi-Robot Area Coverage Control with Repulsive Risk Density

This work formulates an area coverage control problem for multi-agent systems operating under partial environmental information. Mobile targets are modeled as risk sources, and the risk density is incorporated directly into the agent dynamics as a repulsive term, enabling online motion planning in service robotics applications.

PhD research in multi-robot area coverage, supervised by Dr. Davide Spinello at the University of Ottawa.

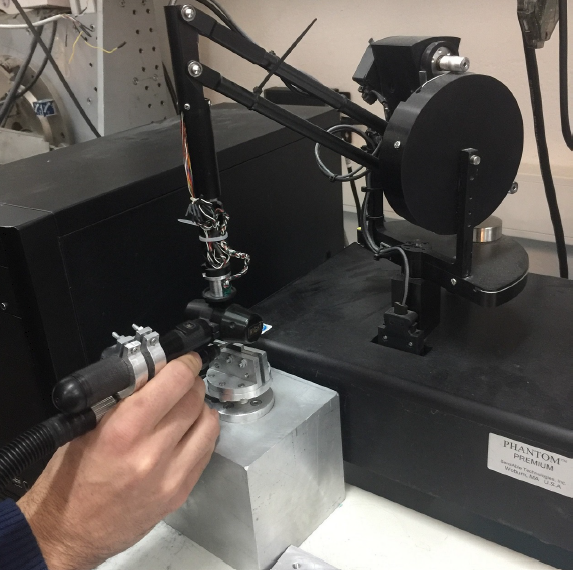

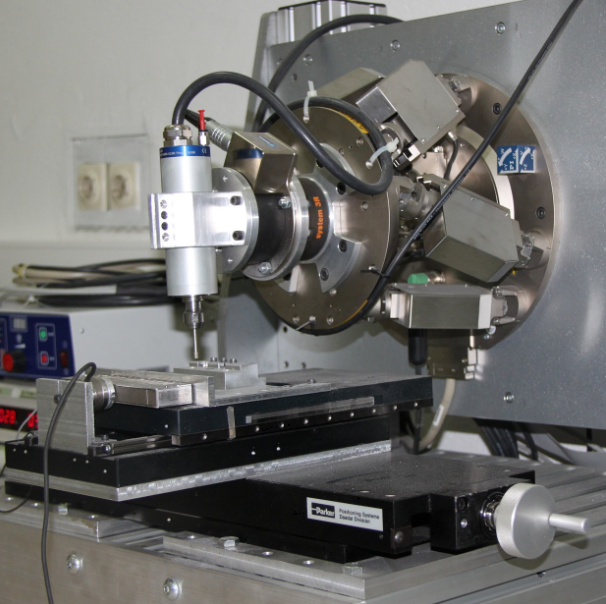

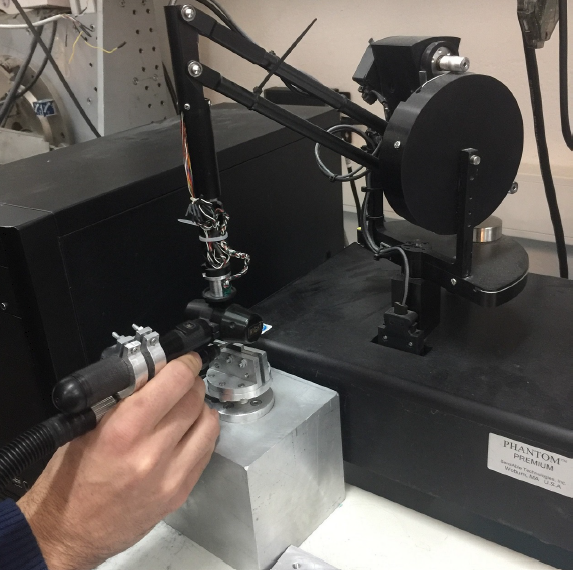

Robotic Deburring via Learning from Demonstration

This work presents a learning-from-demonstration approach for robotic deburring of workpieces with unknown shapes. Task-relevant motions of a human expert were recorded using a 6-DOF haptic device and encoded using augmented Dynamic Movement Primitives (DMPs) to generate adaptable deburring trajectories.

M.Sc. research, part of the “Development of a High-Precision Hybrid Robotic Deburring System” project funded by TÜBİTAK.

Adaptive Policy Regularization for Smooth Control in Reinforcement Learning

Payam Parvizi, Abhishek Naik, Colin Bellinger, Ross Cheriton, Davide Spinello

Authorea, 2026. Submitted to IEEE Transactions on Automation Science and Engineering

Paper • Code

Adaptive Policy Smoothing in Reinforcement Learning: Applications to Wavefront Sensorless Adaptive Optics and Robotics

Payam Parvizi

Ph.D. Thesis, University of Ottawa, 2025

Ph.D. Thesis

Action-Regularized Reinforcement Learning for Adaptive Optics in Optical Satellite Communication

Payam Parvizi, Colin Bellinger, Ross Cheriton, Abhishek Naik, Davide Spinello

Optica Open, 2025. In final production at Journal of the Optical Society of America B (Optica)

Paper • Code

Reinforcement Learning Environment for Wavefront Sensorless Adaptive Optics in Single-Mode Fiber-Coupled Optical Satellite Communications Downlinks

Payam Parvizi, Runnan Zou, Colin Bellinger, Ross Cheriton, Davide Spinello

Photonics, vol. 10, no. 12, 2023 (MDPI)

Paper • Code

Wavefront Sensorless Adaptive Optics for Free-Space Satellite-to-Ground Communication Using Reinforcement Learning

Runnan Zou, Payam Parvizi, Ross Cheriton, Colin Bellinger, Davide Spinello

COAT 2023

Paper

Demonstration of Robotic Deburring Process Motor Skills from Motion Primitives of Human Skill Model

Payam Parvizi

M.Sc. Thesis, Middle East Technical University (METU), 2018

M.Sc. Thesis

Dynamic Movement Primitives and Force Feedback: Teleoperation in Precision Grinding Process

Kemal Açıkgöz, Payam Parvizi, Abdulhamit Dönder, Musab Çağrı Uğurlu, Erhan İlhan Konukseven

IEEE International Conference on Electrical and Electronics Engineering (ELECO), 2017

Paper

Parametrization of Robotic Deburring Process with Motor Skills from Motion Primitives of Human Skill Model

Payam Parvizi, Musab Çağrı Uğurlu, Kemal Açıkgöz, Erhan İlhan Konukseven

IEEE International Conference on Methods and Models in Automation and Robotics (MMAR), 2017

Paper

2025

Poster Presentation: Photonics North (Ottawa, Canada)

2023

Poster Presentation: Technology for the Digital Transformation of Society, University of Ottawa

Work Experience

- Research Associate, University of Ottawa (Nov. 2025 – Present)

- Research Assistant, University of Ottawa & NRC (Apr. 2022 – Sept. 2025)

- Teaching Assistant, University of Ottawa (Jan. 2019 – Apr. 2022)

- Project Assistant, Middle East Technical University & TÜBİTAK (Nov. 2015 – Nov. 2017)

- R&D Intern, Mercedes-Benz Türk Inc. (Jun. 2013 – Aug. 2013)

- Maintenance Engineering Intern, Turkish Airlines Technic (Jan. 2012 – Mar. 2012)

Education

- Ph.D. in Mechanical Engineering, University of Ottawa (2025)

- M.Sc. in Mechanical Engineering, Middle East Technical University (2018)

- B.Sc. in Aerospace Engineering, Middle East Technical University (2014)

Teaching Experience

- MCG 3307: Control Systems (Winter 2019, 2020, 2021 & 2022)

- MCG 3306: System Dynamics (Fall 2020 & 2021)

- MCG 3305: Biomedical System Dynamics (Fall 2019)

Certifications

- MITx MicroMasters: Statistics and Data Science (2019–2020)

  - Machine Learning with Python

  - Fundamentals of Statistics

  - Probability